library(tidyverse)

library(palmerpenguins)

if (!file.exists("data/galton.csv")) {

download.file(url = "https://raw.githubusercontent.com/CU-F24-MDSSB-01-Concepts-Tools/Website/refs/heads/main/data/galton.csv",

destfile = "data/galton.csv")

}

if (!file.exists("data/Viertelfest.csv")) {

download.file(url = "https://raw.githubusercontent.com/CU-F24-MDSSB-01-Concepts-Tools/Website/refs/heads/main/data/Viertelfest.csv",

destfile = "data/Viertelfest.csv")

}

galton <- read_csv("data/galton.csv")

viertel <- read_csv("data/Viertelfest.csv")W#05: Descriptive Statistics, Exploratory Data Analysis

Preliminaries

In this lectures we will use these packages and datasets. You need to do this code in the Console to download data and play with some of the code in this lecture.

Descriptive Statistics

Descriptive vs. Inferential Statistics

- The process of using and analyzing summary statistics

- Solely concerned with properties of the observed data.

- Distinct from inferential statistics:

- Inference of properties of an underlying distribution given sampled observations from a larger population.

Summary Statistics are used to summarize a set of observations to communicate the largest amount of information as simple as possible.

Summary statistics

Univariate (for one variable)

- Measures of location, or central tendency

- Measures of statistical dispersion

- Measure of the shape of the distribution like skewness or kurtosis

Bivariate (for two variables)

- Measures of statistical dependence or correlation

Measures of central tendency

Measures of central tendency

Goal: For a sequence of numerical observations \(x_1,\dots,x_n\) we want to measure

- the “typical” value.

- a value summarizing the location of values on the numerical axis.

Three different ways:

- Arithmetic mean (also mean, average): Sum of the all observations divided by the number of observations \(\frac{1}{n}\sum_{i=1}^n x_i\)

- Median: Assume \(x_1 \leq x_2 \leq\dots\leq x_n\). Then the median is middlemost values in the sequence \(x_\frac{n+1}{2}\) when \(n\) odd. For \(n\) even there are two middlemost values and the median is \(\frac{x_\frac{n}{2} + x_\frac{n+1}{2}}{2}\)

- Mode: The value that appears most often in the sequence.

Measures of central tendency: Examples

[1] 63.4[1] 4[1] 3.5[1] 5.5Median of an even number of numbers: Mean of two most central numbers.

Philosophy of aggregation

- The mean represents total value per value.

Example: per capita income in a town is the total income per individual - The median represents the value such that half of the values are lower and higher.

In a democracy where each value is represented by one voter preferring it, the median is the value which is unbeatable by an absolute majority. Half of the people prefer higher the other half lower values. (Median voter model) - The mode represents the most common value.

In a democracy, the mode represents the winner of a plurality vote where each value runs as a candidate and the winner is the one with the most votes.

Mean, Median, Mode properties

Do they deliver one unambiguous answer for any sequence?

Mean and median, yes.

The mode has no rules for a tie.

Can they by generalized to variables with ordered or even unordered categories?

Mean: No.

Median: For ordered categories (except when even number and the two middlemost are not the same)

Mode: For any categorical variable.

Is the measure always also in the data sequence?

Mean: No.

Median: Yes, for sequences of odd length.

Mode: Yes.

Generalized means1

For \(x_1, \dots, x_n > 0\) and \(p\in \mathbb{R}_{\neq 0}\) the generalized mean is

\[M_p(x_1, \dots, x_n) = (\frac{1}{n}\sum_{i=1}^n x_i^p)^\frac{1}{p}\]

For \(p = 0\) it is \(M_0(x_1, \dots, x_n) = (\prod_{i=1}^n x_i)^\frac{1}{n}\).

\(M_1\) is the arithmetic mean. \(M_0\) is called the geometric mean. \(M_{-1}\) the harmonic mean.

Note: Generalized means are often only reasonable when all values are positive \(x_i > 0\).

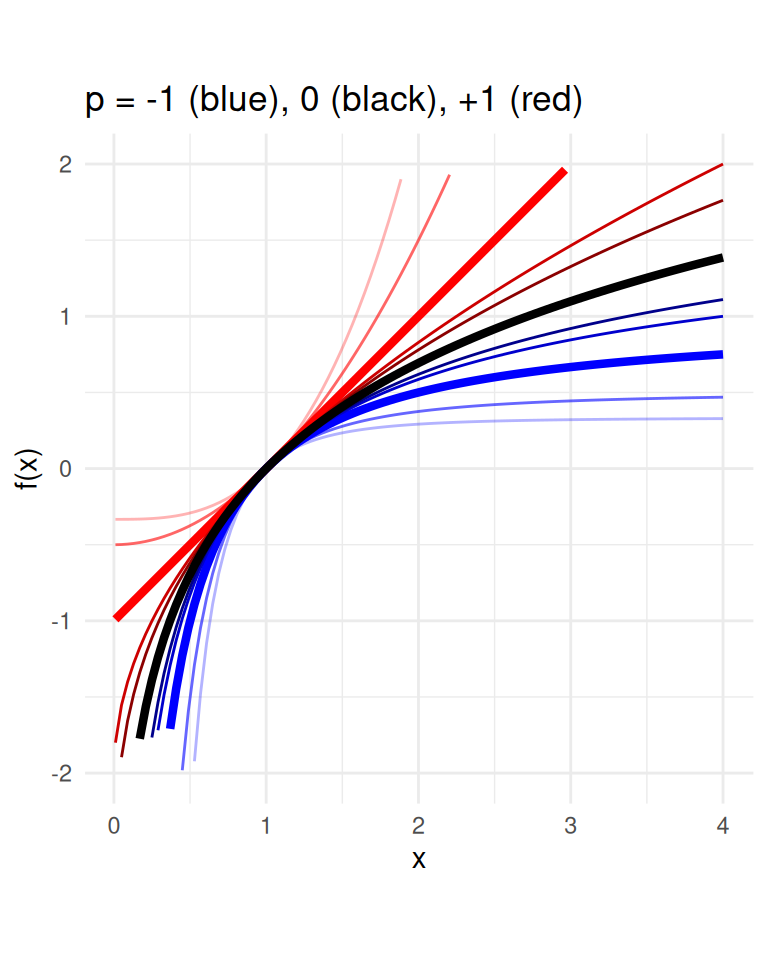

Box-Cox transformation function

For \(p \in \mathbb{R}\): \(f(x) = \begin{cases}\frac{x^p - 1}{p} & \text{for $p\neq 0$} \\ \log(x) & \text{for $p= 0$}\end{cases}\)

The \(p\)-mean is

\[M_p(x) = f^{-1}(\frac{1}{n}\sum_{i=1}^n f(x_i))\]

with \(x = [x_1, \dots, x_n]\). \(f^{-1}\) is the inverse of \(f\).

- Inverse means \(f^-1(f(x)) = x =f(f^-1(x))\).

- Box-Cox is a common transformation in data pre-processing to make the variable’s (arithmetic) mean being a “good” measure of central tendency.

pfun <- function(x, p) (x^p-1)/p

ipfun <- function(x, p) (p*x + 1)^(1/p)

ggplot() +

geom_function(fun = pfun, args = list(p = 1), color="red", size = 1.5) +

geom_function(fun = pfun, args = list(p = 2), color = "red", alpha=0.6) +

geom_function(fun = pfun, args = list(p = 3), color = "red", alpha=0.3) +

geom_function(fun = pfun, args = list(p = 1/2), color = "red3") +

geom_function(fun = pfun, args = list(p = 1/3), color = "red4") +

geom_function(fun = pfun, args = list(p = -1), color = "blue", size = 1.5) +

geom_function(fun = pfun, args = list(p = -1/2), color = "blue3") +

geom_function(fun = pfun, args = list(p = -1/3), color = "blue4") +

geom_function(fun = pfun, args = list(p = -2), color = "blue", alpha=0.6) +

geom_function(fun = pfun, args = list(p = -3), color = "blue", alpha=0.3) +

geom_function(fun = log, color = "black", size = 1.5) + coord_fixed() +

xlim(c(0.01,4)) + ylim(c(-2,2)) +

labs(x="x", y = "f(x)", title = "p = -1 (blue), 0 (black), +1 (red)") +

theme(title = element_text(size = 2)) +

theme_minimal()

Measures of central tendency and the Wisdom of the Crowd

Application: The Wisdom of the Crowd

- Phenomenon: When collective estimate of a diverse group of independent individuals is better than that of single experts.

- The classical wisdom-of-the-crowds finding is about point estimation of a continuous quantity.

- Popularized by James Surowiecki (2004).

- The opening anecdote is about Francis Galton’s1 surprise in 1907 that the crowd at a county fair accurately guessed the weight of an ox’s meat when their individual guesses were averaged.

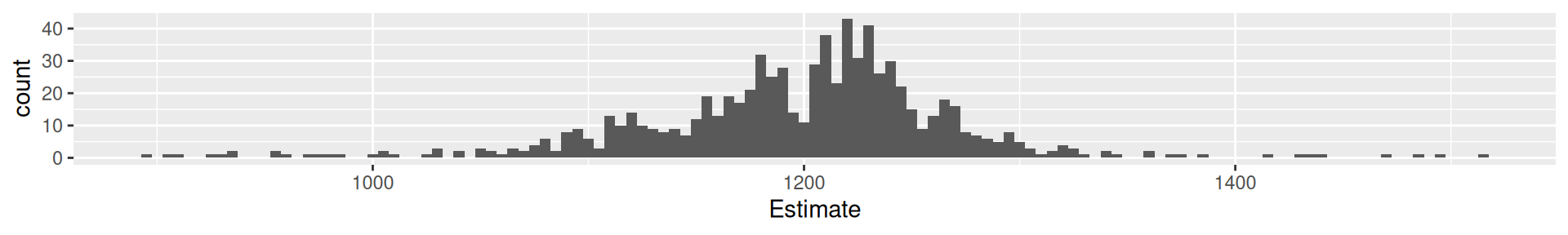

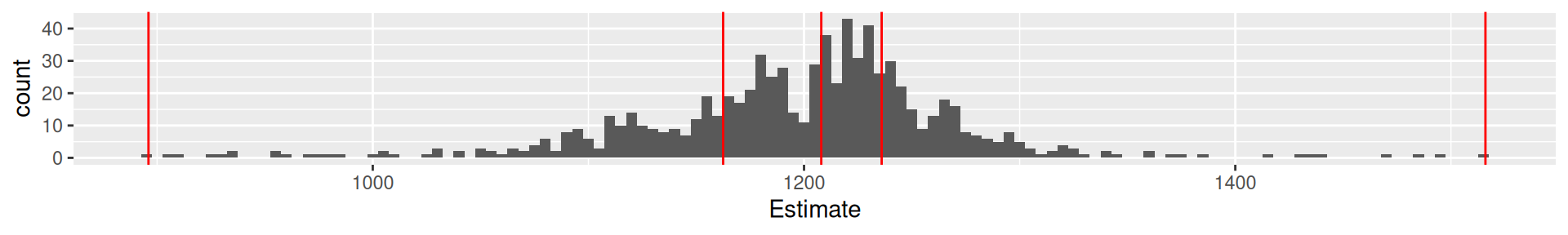

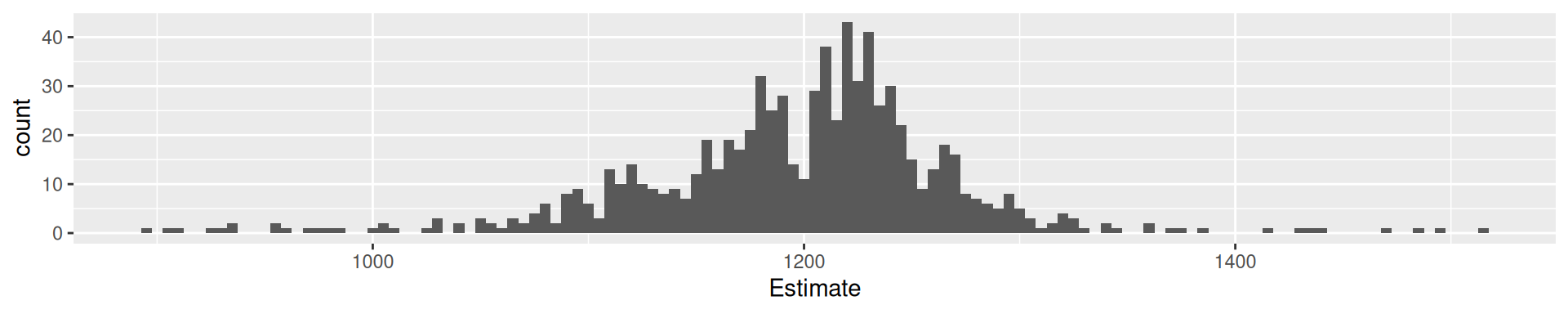

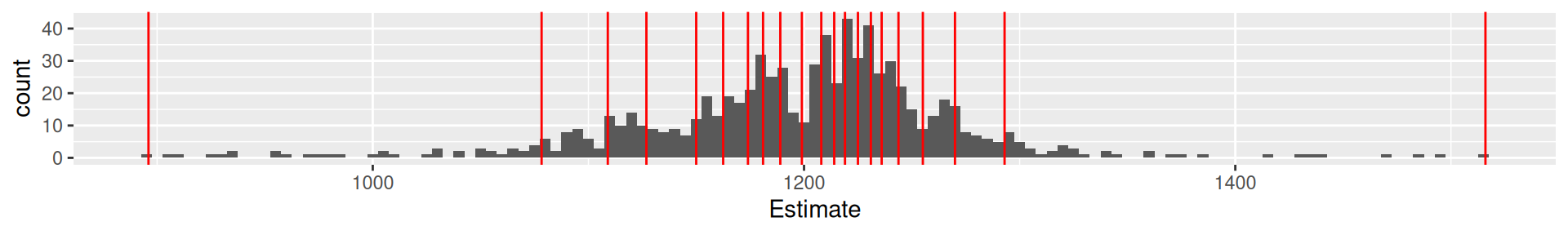

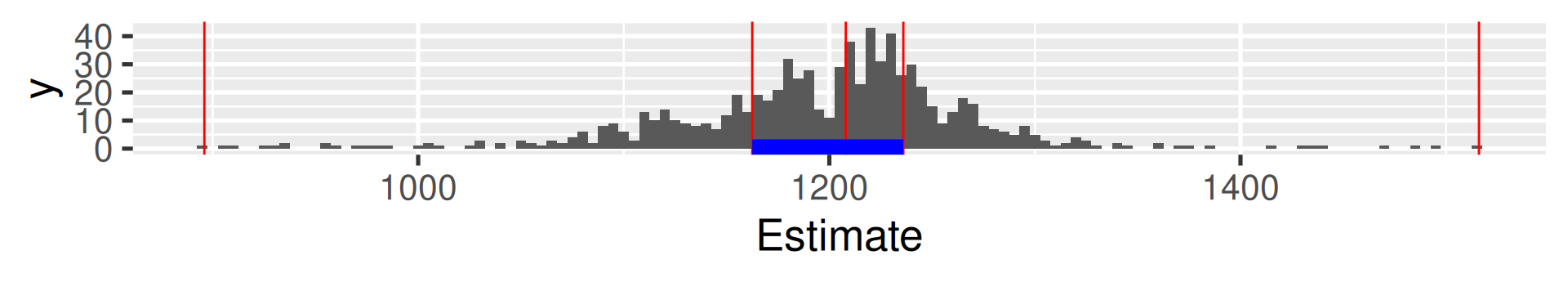

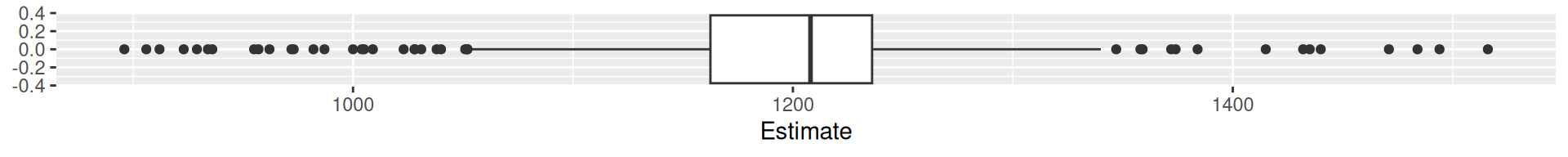

Galton’s data1

What is the weight of the meat of this ox?

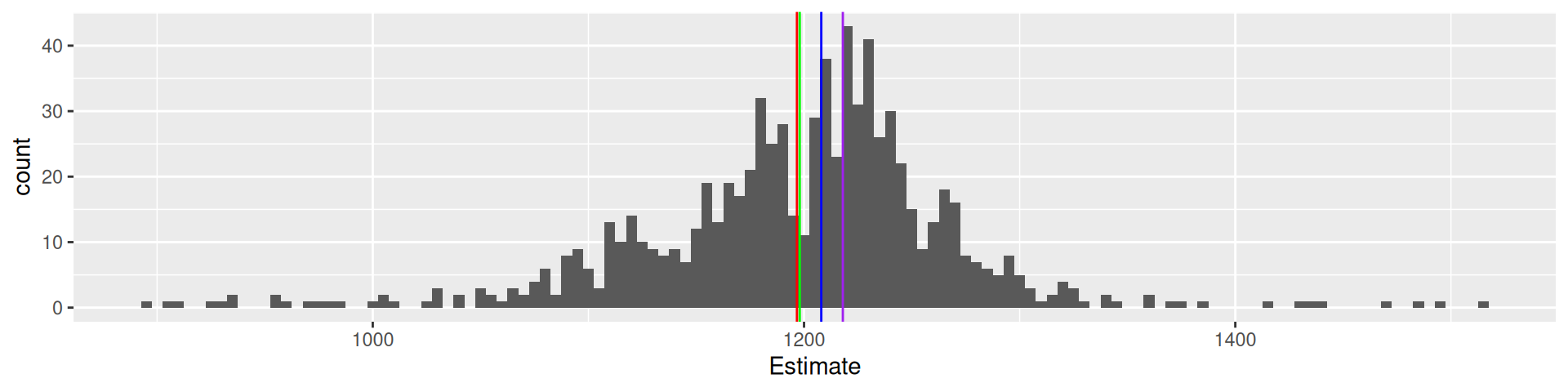

galton |> ggplot(aes(Estimate)) + geom_histogram(binwidth = 5) +

geom_vline(xintercept = 1198, color = "green") +

geom_vline(xintercept = mean(galton$Estimate), color = "red") +

geom_vline(xintercept = median(galton$Estimate), color = "blue") +

geom_vline(xintercept = Mode(galton$Estimate), color = "purple")

787 estimates, true value 1198, mean 1196.7, median 1208, mode 1218

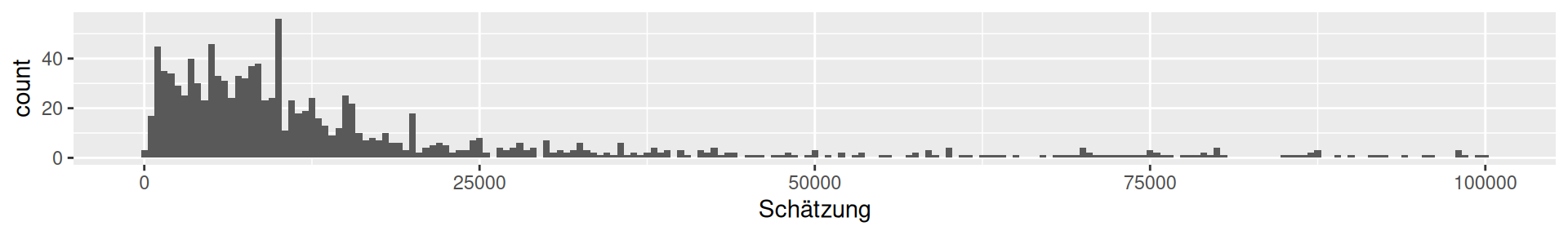

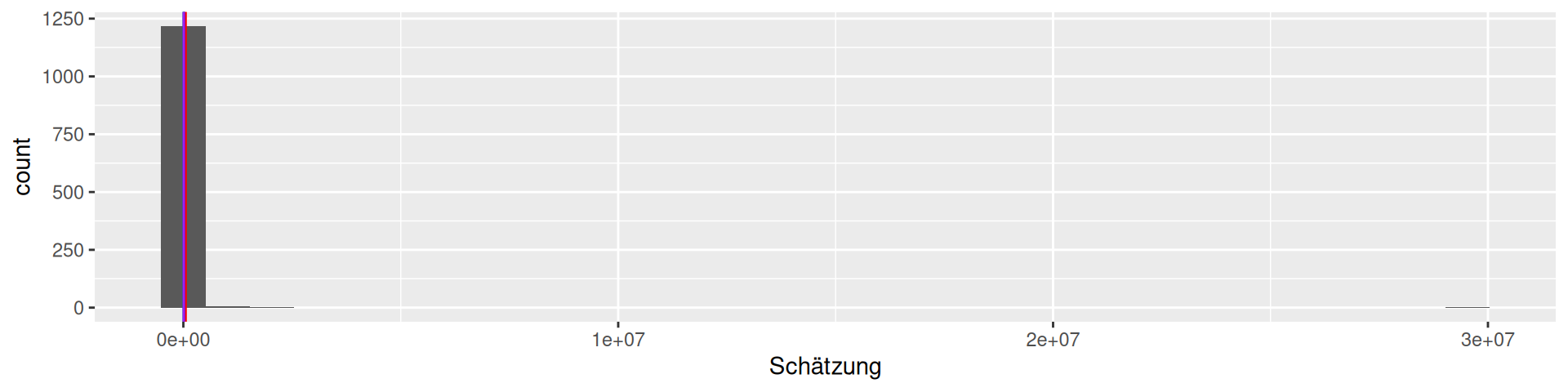

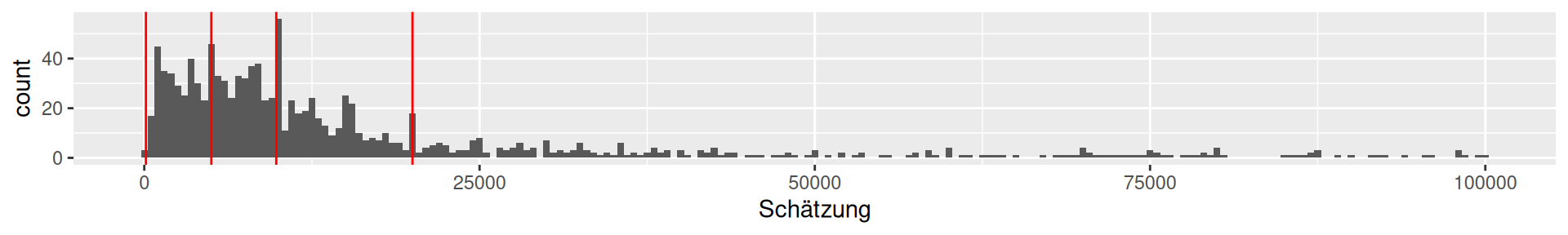

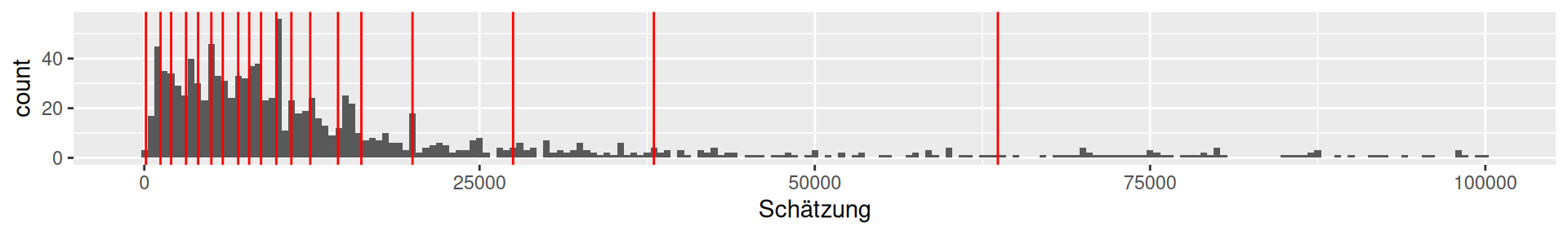

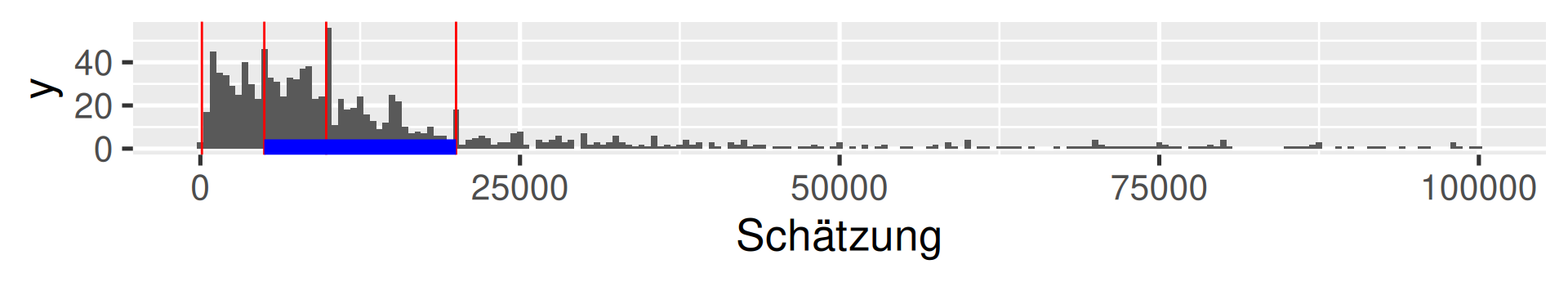

Viertelfest Bremen 20081

How many lots will be sold by the end of the festival?

viertel |> ggplot(aes(`Schätzung`)) + geom_histogram() +

geom_vline(xintercept = 10788, color = "green") +

geom_vline(xintercept = mean(viertel$Schätzung), color = "red") +

geom_vline(xintercept = median(viertel$Schätzung), color = "blue") +

geom_vline(xintercept = Mode(viertel$Schätzung), color = "purple")

1226 estimates, the maximal value is 29530000! We should filter …

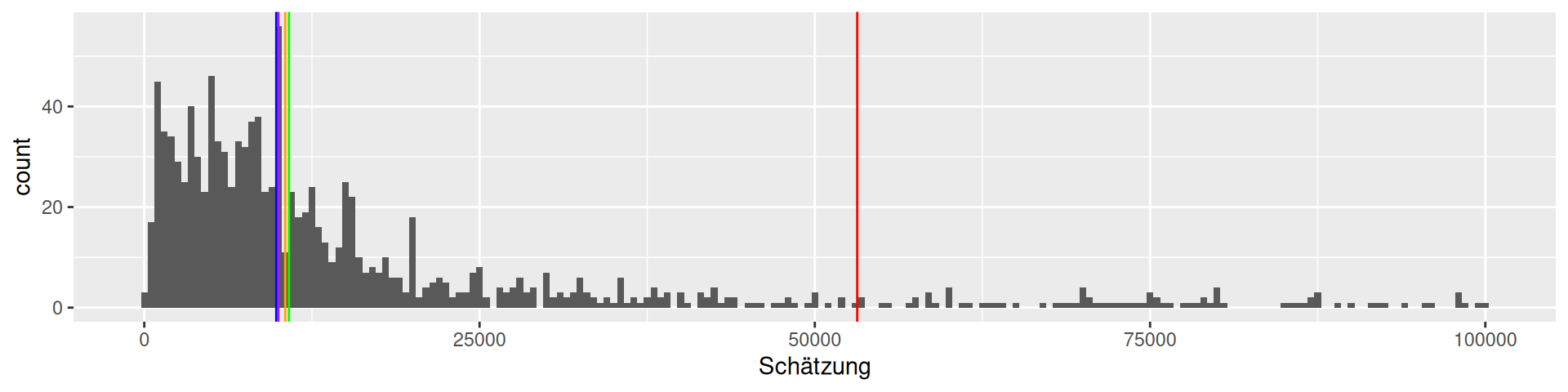

Viertelfest Bremen 2008

How many lots will be sold by the end of the festival?

viertel <- read_csv("data/Viertelfest.csv")

viertel |> filter(Schätzung<100000) |> ggplot(aes(`Schätzung`)) + geom_histogram(binwidth = 500) +

geom_vline(xintercept = 10788, color = "green") +

geom_vline(xintercept = mean(viertel$Schätzung), color = "red") +

geom_vline(xintercept = median(viertel$Schätzung), color = "blue") +

geom_vline(xintercept = Mode(viertel$Schätzung), color = "purple") +

geom_vline(xintercept = exp(mean(log(viertel$Schätzung))), color = "orange")

1226 estimates, true value 10788, mean 53163.9, median 9843, mode 10000,

geometric mean 10510.1

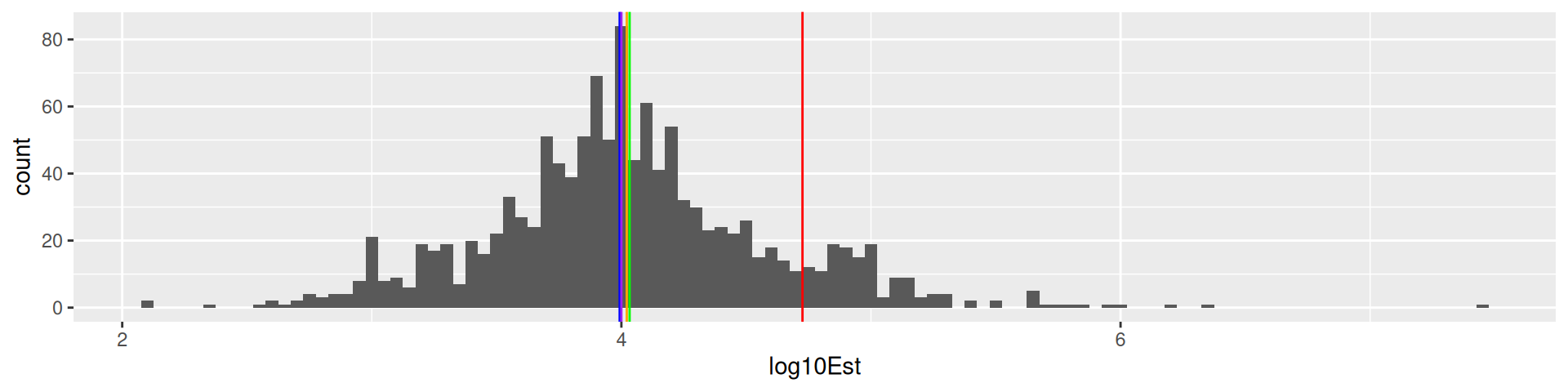

\(\log_{10}\) transformation Viertelfest

viertel |> mutate(log10Est = log10(Schätzung)) |> ggplot(aes(log10Est)) + geom_histogram(binwidth = 0.05) +

geom_vline(xintercept = log10(10788), color = "green") +

geom_vline(xintercept = log10(mean(viertel$Schätzung)), color = "red") +

geom_vline(xintercept = log10(median(viertel$Schätzung)), color = "blue") +

geom_vline(xintercept = log10(Mode(viertel$Schätzung)), color = "purple") +

geom_vline(xintercept = mean(log10(viertel$Schätzung)), color = "orange")

1226 estimates, true value 10788, mean 53163.9, median 9843, mode 10000,

geometric mean 10510.1

Wisdom of the crowd insights

- In Galton’s sample the different measures do not make a big difference

- In the Viertelfest data the arithmetic mean performs very bad!

- The mean is vulnerable to extreme values.

Quoting Galton on the mean as a democratic aggregation function:

“The mean gives voting power to the cranks in proportion to their crankiness.” - The mode tends to be on focal values as round numbers (10,000). In Galton’s data this is not so pronounced beause estimators used several weight units (which Galton converted to pounds).

- How to choose a measure to aggregate the wisdom?

- By the nature of the estimate problem? Is the scale mostly clear? (Are we in the hundreds, thousands, ten thousands, …)

- By the nature of the distribution?

- There is no real insurance against a systematic bias in the population.

Measures of dispersion

Measures of dispersion1

Goal: We want to measure

- How spread out values are around the central tendency.

- How stretched or squeezed is the distribution?

Variance is the mean of the squared deviation from the mean: \(\text{Var}(x) = \frac{1}{n}\sum_{i=1}^n(x_i - \mu)^2\) where \(\mu\) (mu) is the mean.

Standard deviation is the square root of the variance \(\text{SD}(x) = \sqrt{\text{Var}(x)}\).

The standard deviation is often denoted \(\sigma\) (sigma) and the variance \(\sigma^2\).

Mean absolute deviation (MAD) is the mean of the absolute deviation from the mean: \(\text{MAD}(x) = \frac{1}{n}\sum_{i=1}^n|x_i - \mu|\).

Warning: MAD can also be Median absolute deviation from the median.

Range is the difference of the maximal and the minimal value \(\max(x) - \min(x)\).

Examples of measures of dispersion

[1] 719774887849[1] 848395.5[1] 8771.803[1] 120 29530000[1] 29529880Normalization of variables

In Machine Learning, Statistics, and Descripitve Analysis we often want to bring different variables to common scales. We want to make the dispersion and the location comparable.

To that end, some linear transformation are common:

- Standardization

- Min-max Feature Scaling

When we normalize a variable we receive a dimensionless variable. It does not have a unit.

Example: We measure height in \(m\) meters. When we standardize are scale by min-max the new variable has no unit. Mathematically it cancels out.

Standardization

Variables are standardized by subtracting their mean and then dividing by their standard deviations.

A value from a standardized variable is called a standard score or z-score.

\(z_i = \frac{x_i - \mu}{\sigma}\)

where \(\mu\) is the mean and \(\sigma\) the standard deviation of the vector \(x\).

- This is a shift-scale transformation. We shift each value by the mean and scale by the standard deviation.

- A standard score \(z_i\) represents how many standard deviations \(x_i\) is away from the mean of \(x\).

- The standard scores \(z_i\)’s have a mean of zero and a standard deviation of one (by construction).

Min-max Feature Scaling

When we want to make the values of the scaled variable to range from zero to one.

The transformed variable values \(y_i\)’s are

\[y_i = \frac{x_i - \min(x)}{\max(x) - \min(x)}.\]

We shift by the minimum \(\min(x)\) and scale by the range \(\max(x) - \min(x)\).

- What are mean and standard deviation of \(y\)? We do not know, but less than one.

- Caution: The new values are heavily dependent of the actual values of \(\min\) and \(\max\)!

Data Overview with summary

Data Sets 1a and 1b: Widsom of Crowd

1a: Ox weigh guessing competition 1907 (collected by Galton)

1b: Viertelfest “guess the number of sold lots”-competition 2009

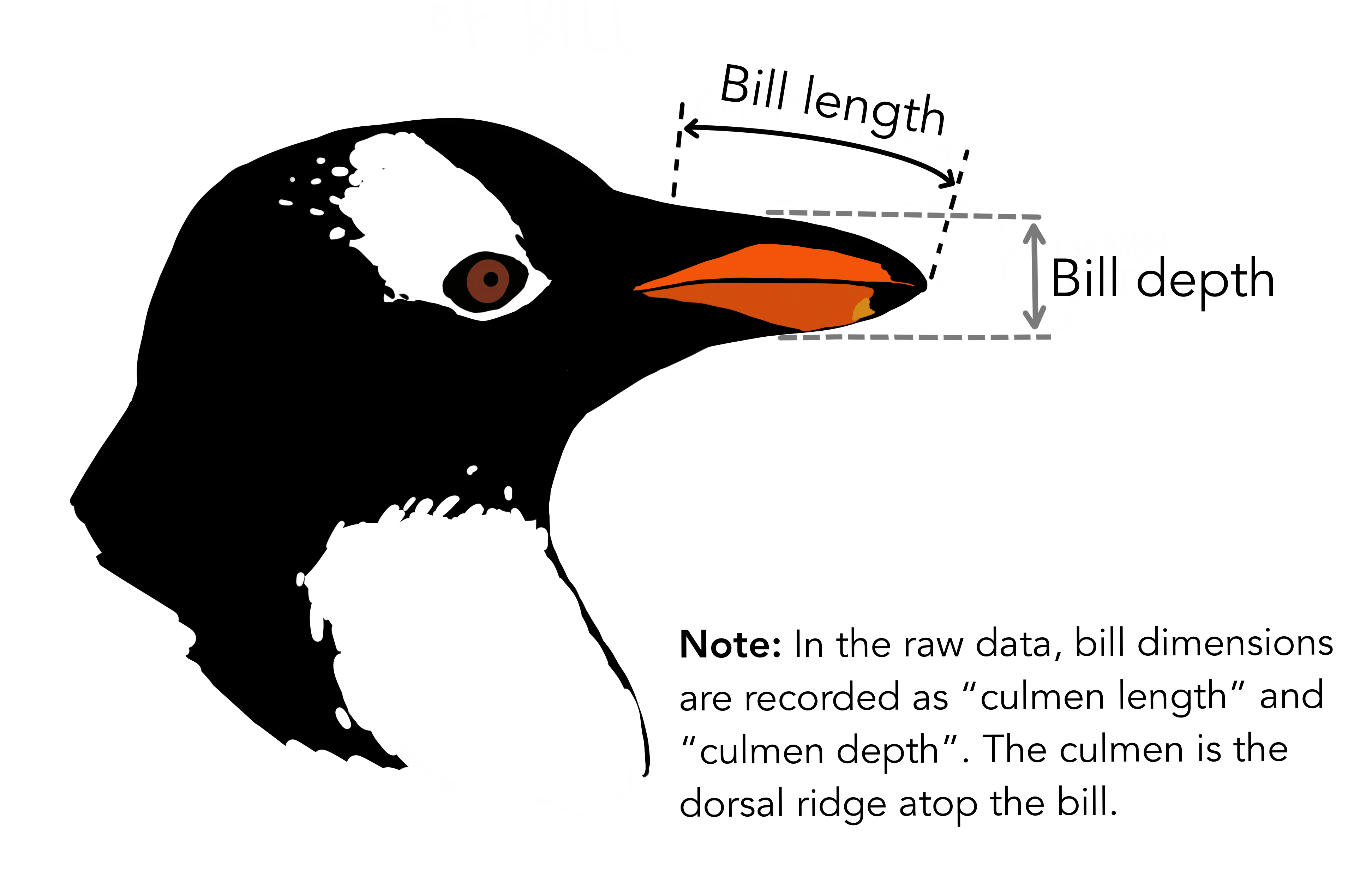

Data Set 2: Palmer Penguins

Chinstrap, Gentoo, and Adélie Penguins

# A tibble: 344 × 8

species island bill_length_mm bill_depth_mm flipper_length_mm body_mass_g

<fct> <fct> <dbl> <dbl> <int> <int>

1 Adelie Torgersen 39.1 18.7 181 3750

2 Adelie Torgersen 39.5 17.4 186 3800

3 Adelie Torgersen 40.3 18 195 3250

4 Adelie Torgersen NA NA NA NA

5 Adelie Torgersen 36.7 19.3 193 3450

6 Adelie Torgersen 39.3 20.6 190 3650

7 Adelie Torgersen 38.9 17.8 181 3625

8 Adelie Torgersen 39.2 19.6 195 4675

9 Adelie Torgersen 34.1 18.1 193 3475

10 Adelie Torgersen 42 20.2 190 4250

# ℹ 334 more rows

# ℹ 2 more variables: sex <fct>, year <int>summary from base R

Shows summary statistics for the values in a vector

Or for all columns in a data frame

species island bill_length_mm bill_depth_mm

Adelie :152 Biscoe :168 Min. :32.10 Min. :13.10

Chinstrap: 68 Dream :124 1st Qu.:39.23 1st Qu.:15.60

Gentoo :124 Torgersen: 52 Median :44.45 Median :17.30

Mean :43.92 Mean :17.15

3rd Qu.:48.50 3rd Qu.:18.70

Max. :59.60 Max. :21.50

NA's :2 NA's :2

flipper_length_mm body_mass_g sex year

Min. :172.0 Min. :2700 female:165 Min. :2007

1st Qu.:190.0 1st Qu.:3550 male :168 1st Qu.:2007

Median :197.0 Median :4050 NA's : 11 Median :2008

Mean :200.9 Mean :4202 Mean :2008

3rd Qu.:213.0 3rd Qu.:4750 3rd Qu.:2009

Max. :231.0 Max. :6300 Max. :2009

NA's :2 NA's :2 Question

What does

1st Qu. and

3rd Qu. mean?

Quantiles

Quantiles

Cut points specifying intervals which contain equal amounts of values of the distribution.

\(q\)-quantiles divide numbers into \(q\) intervals covering all values.

The quantiles are the cut points: For \(q\) intervals there are \(q-1\) cut points of interest.

- The one 2-quantile is the median

- The three 4-quantiles are called quartiles

1st Qu.is the first quartile- The second quartile is the median

3rd Qu.is the third quartile

- 100-quantiles are called percentiles

1a Galton: Quartiles

0% 25% 50% 75% 100%

896.0 1162.5 1208.0 1236.0 1516.0 Interpretation: What does the value at 25% mean?

The 25% of all values are lower than the value. 75% are larger.

1a Galton: 20-quantiles

0% 5% 10% 15% 20% 25% 30% 35% 40% 45% 50%

896.0 1078.3 1109.0 1126.9 1150.0 1162.5 1174.0 1181.0 1189.0 1199.0 1208.0

55% 60% 65% 70% 75% 80% 85% 90% 95% 100%

1214.0 1219.0 1225.0 1231.0 1236.0 1243.8 1255.1 1270.0 1293.0 1516.0

1b Viertelfest: Quartiles

0% 25% 50% 75% 100%

120 5000 9843 20000 29530000

1b Viertelfest: 20-quantiles

0% 5% 10% 15% 20% 25%

120.00 1213.25 2000.00 3115.00 4012.00 5000.00

30% 35% 40% 45% 50% 55%

5853.50 7000.00 7821.00 8705.25 9843.00 10967.50

60% 65% 70% 75% 80% 85%

12374.00 14444.00 16186.00 20000.00 27500.00 38000.00

90% 95% 100%

63649.50 99773.50 29530000.00

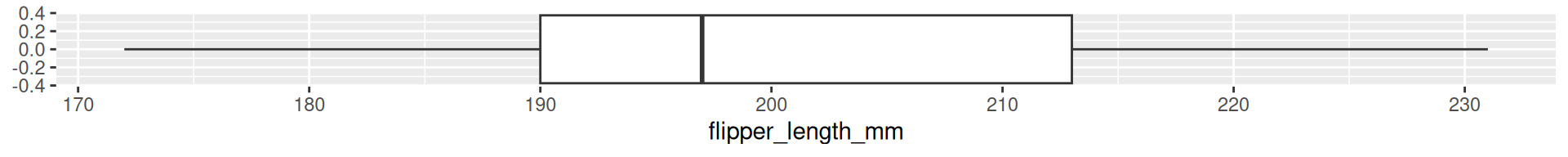

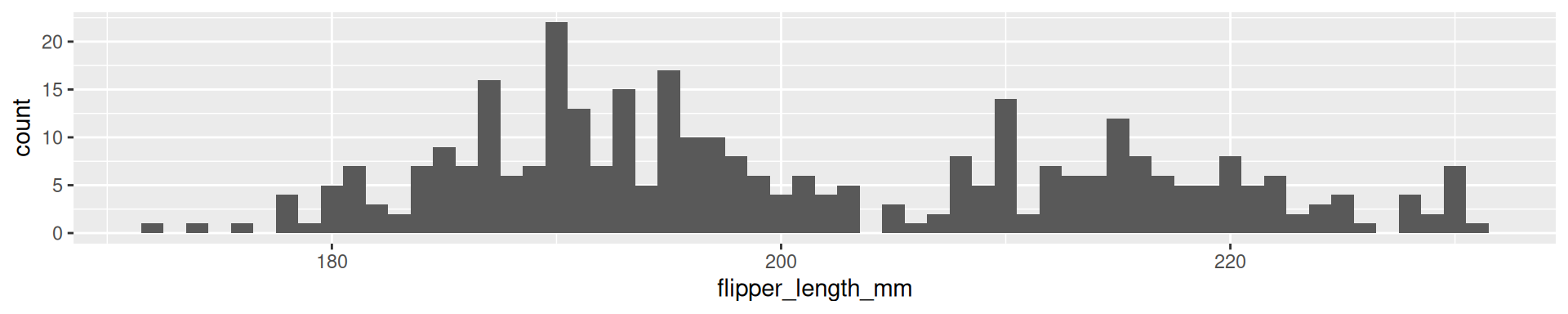

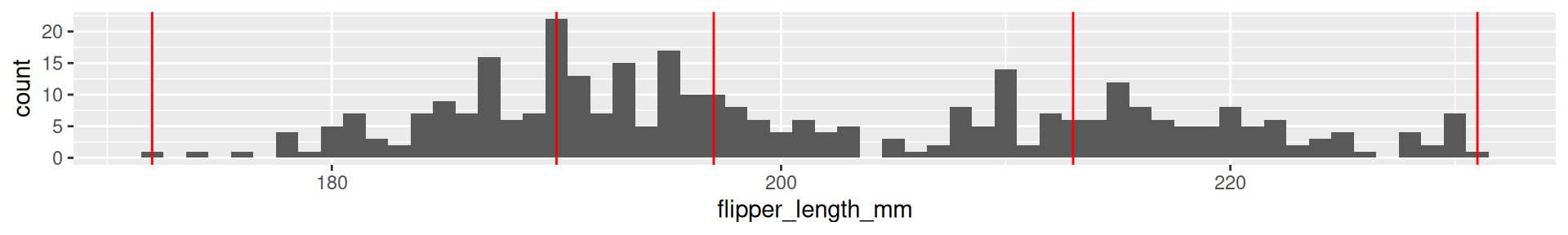

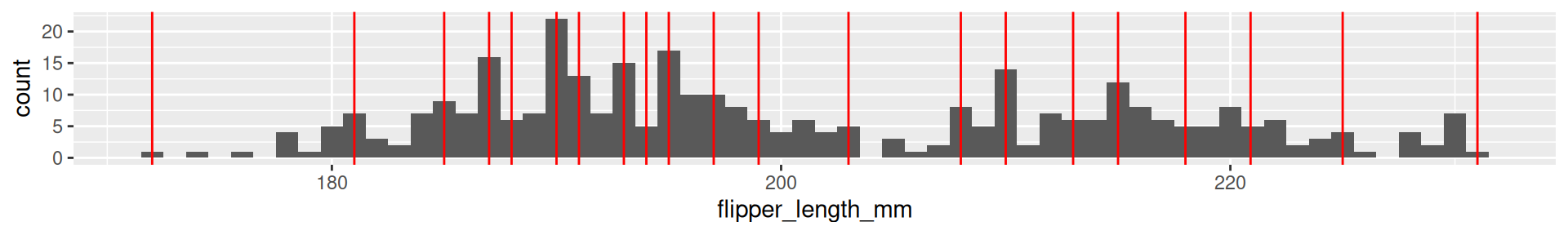

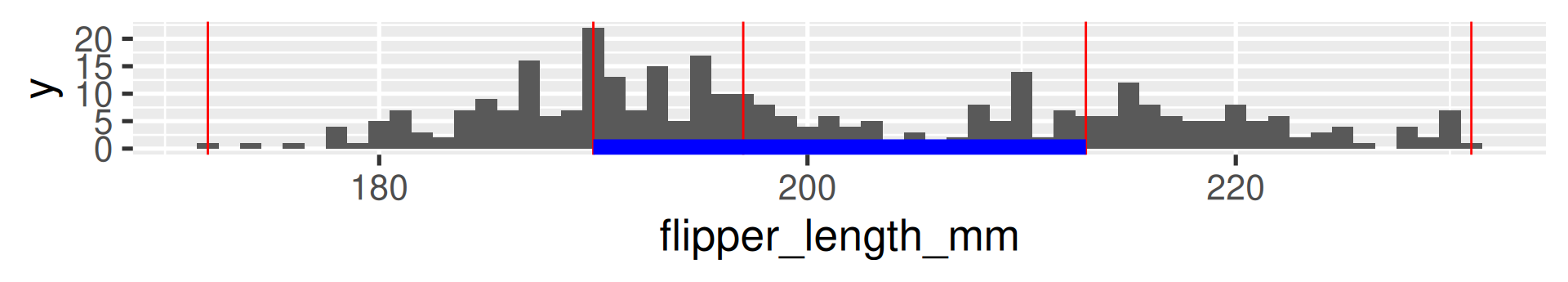

2 Palmer Penguins Flipper Length: Quartiles

0% 25% 50% 75% 100%

172 190 197 213 231

2 Palmer Penguins Flipper Length: 20-quantiles

0% 5% 10% 15% 20% 25% 30% 35% 40% 45% 50% 55% 60%

172.0 181.0 185.0 187.0 188.0 190.0 191.0 193.0 194.0 195.0 197.0 199.0 203.0

65% 70% 75% 80% 85% 90% 95% 100%

208.0 210.0 213.0 215.0 218.0 220.9 225.0 231.0

Interquartile range (IQR)

The difference between the 1st and the 3rd quartile. Alternative dispersion measure.

The range in which the middle 50% of the values are located.

Examples:

[1] 73.5[1] 73.58677[1] 15000[1] 848395.5[1] 23[1] 14.06171

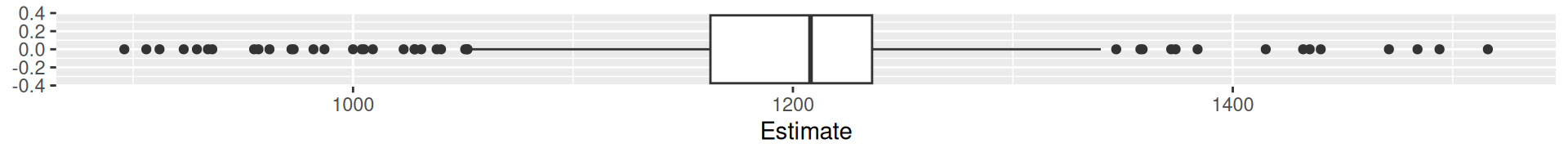

Boxplots

A condensed visualization of a distribution showing location, spread, skewness and outliers.

- The box shows the median in the middle and the other two quartiles as their borders.

- Whiskers: From above the upper quartile, a distance of 1.5 times the IQR is measured out and a whisker is drawn up to the largest observed data point from the dataset that falls within this distance. Similarly, for the lower quartile.

- Whiskers must end at an observed data point! (So lengths can differ.)

- All other values outside of box and whiskers are shown as points and often called outliers. (There may be none.)

Boxplots vs. histograms

- Histograms can show the shape of the distribution well, but not the summary statistics like the median.

Boxplots vs. histograms

- Boxplots can not show the patterns of bimodal or multimodal distributions.

More Summary Statistics

Minimizing proporties of Mean and Median

Mean minimizes the mean of squared deviations from it. No other value \(a\) has a lower mean of square distances from the data points. \(\frac{1}{n}\sum_{i=1}^n(x_i - a)^2\).

Median minimizes the sum of the absolute deviation. No other value \(a\) has a lower mean of absolute distances from the data points. \(\frac{1}{n}\sum_{i=1}^n|x_i - a|\).

The Concept of Minimizing

Is central for all statisitical fitting and learning procedures! These are among the simplest examples of this concept.

Two families of summary statistics

- Measures based on sums (related to mathematical moments)

- Mean

- Standard deviation

- Measures based on the ordered sequence of these observations (order statistics)

- Median (and all quantiles)

- Interquartile range

A hierarchy of moments

\(k\)th raw moment: \(\frac{1}{n}\sum_i^n x_i^k\).

- The mean is the 1st raw moment (because no exponents appear in formula)

- The variance is the 2nd raw moment the mean-shifted \(x\)

- The 3rd moment appears in the definition of the skewness of \(x\)

- The 4th moment appears in the definition of the kurtosis of \(x\)

Skewness

The skewness of a distribution is a measure of its asymmetry.

Equation: \(\text{skewness} = \frac{\frac{1}{n}\sum_{i=1}^n(x_i - \mu)^3}{\left(\frac{1}{n}\sum_{i=1}^n(x_i - \mu)^2\right)^{3/2}}\)

- Positive skewness: The right tail is longer or fatter than the left tail.

- Negative skewness: The left tail is longer or fatter than the right tail.

The relation of mean and median can give a hint on skewness!

The Viertelfest data is heavily positively skew. (The Galton data is a little bit negatively skew, but it is barely visible.)

Kurtosis

The kurtosis of a distribution is a measure of the “tailedness” of the distribution. It often goes along with also higher “peakedness”.

Equation: \(\text{kurtosis} = \frac{\frac{1}{n}\sum_{i=1}^n(x_i - \mu)^4}{\left(\frac{1}{n}\sum_{i=1}^n(x_i - \mu)^2\right)^{2}}\)

- Leptokurtic: Fatter tails and a higher peak than the normal distribution.

- Platykurtic: Thinner tails and a lower peak than the normal distribution.

A logarithmic y-axis shows the fatter tails!

A logarithmic y-axis shows the fatter tails!

Bivariate Summary Statistics

Covariance

Goal: We want to measure the joint variation in numerical observations of two variables \(x_1,\dots,x_n\) and \(y_1, \dots, y_n\).

Covariance

\(\text{cov}(x,y) = \frac{1}{n}\sum_{i=1}^n(x_i - \mu_x)(y_i - \mu_y)\)

where \(\mu_x\) and \(\mu_y\) are the arithmetic means of \(x\) and \(y\).

Note: \(\text{cov}(x,x) = \frac{1}{n}\sum_{i=1}^n(x_i - \mu_x)(x_i - \mu_x) = \frac{1}{n}\sum_{i=1}^n(x_i - \mu_x)^2 = \text{Var}(x)\)

Correlation

Goal: We want to measure the linear correlation in numerical observations of two variables \(x_1,\dots,x_n\) and \(y_1, \dots, y_n\).

Pearson correlation coefficient \(r_{xy} = \frac{\sum_{i=1}^n(x_i - \mu_x)(y_i - \mu_y)}{\sqrt{\sum_{i=1}^n(x_i - \mu_x)^2}\sqrt{\sum_{i=1}^n(y_i - \mu_y)^2}}\)

(Note: Do you are missing $ terms compared to covariance? They all cancel out!)

Relation to covariance: \(r_{xy} = \frac{\text{cov}(x,y)}{\sigma_x\sigma_y}\)

where \(\sigma_x\) and \(\sigma_y\) are the standard deviations of \(x\) and \(y\).

Relation to standard scores:

When \(x\) and \(y\) are standard scores (each with mean zero and standard deviation one), then \(\text{cov}(x,y) = r_{xy}\).

Interpretation of correlation

Correlation between two vectors \(x\) and \(y\) is “normalized”.

- The maximal possible values is \(r_{xy} = 1\)

- \(x\) and \(y\) are fully correlated

- The minimal values is \(r_{xy} = -1\)

- \(x\) and \(y\) are anticorrelated

- \(r_{xy} \approx 0\) mean

- the variables are uncorrelated

- \(r_{xy} = r_{yx}\)

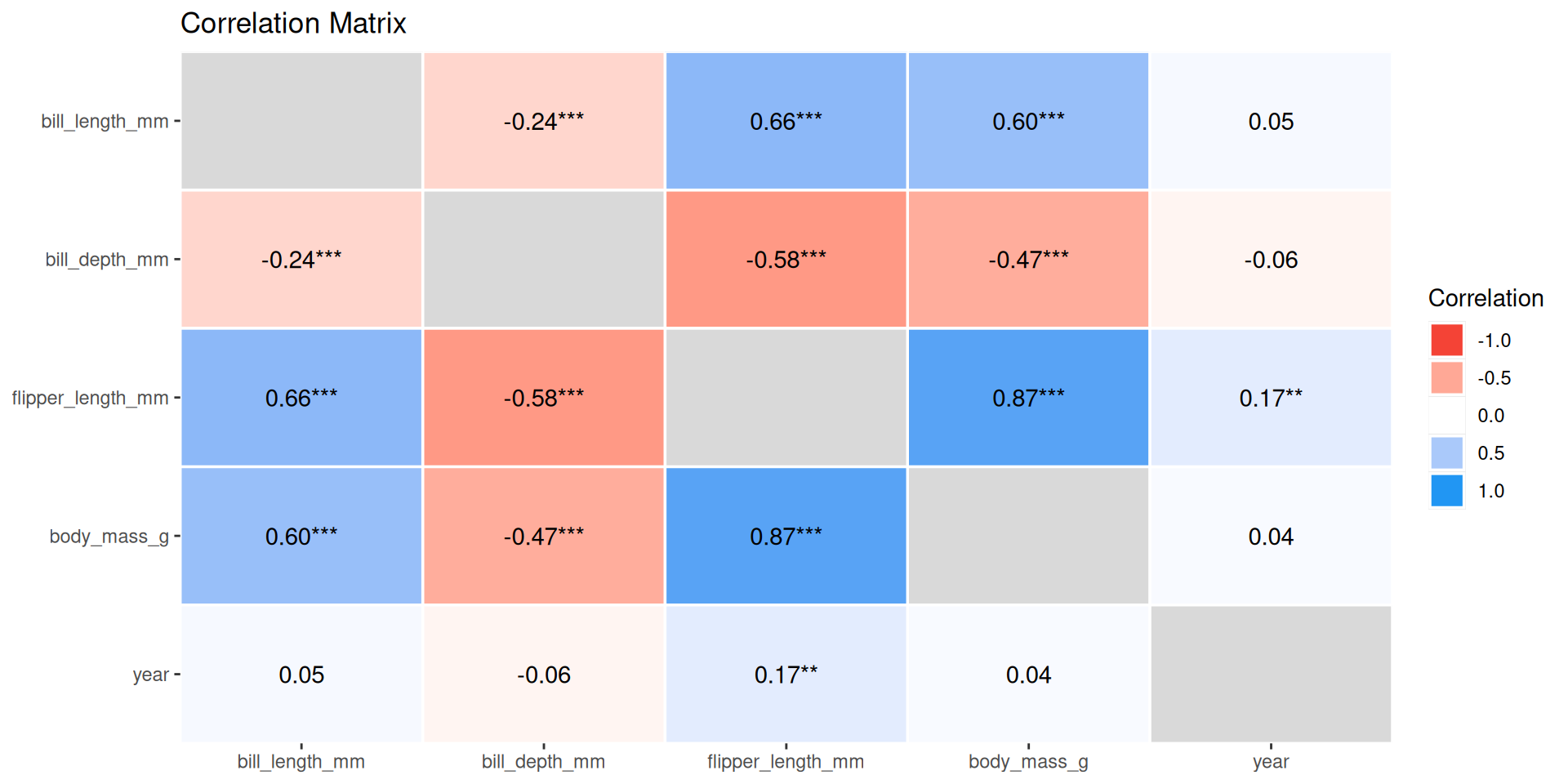

Correlation matrix

Using corrr from the packages tidymodels

# A tibble: 5 × 6

term bill_length_mm bill_depth_mm flipper_length_mm body_mass_g year

<chr> <dbl> <dbl> <dbl> <dbl> <dbl>

1 bill_lengt… NA -0.235 0.656 0.595 0.0545

2 bill_depth… -0.235 NA -0.584 -0.472 -0.0604

3 flipper_le… 0.656 -0.584 NA 0.871 0.170

4 body_mass_g 0.595 -0.472 0.871 NA 0.0422

5 year 0.0545 -0.0604 0.170 0.0422 NA Correlation table

Using correlation from the packages correlation

library(correlation)

results <- palmerpenguins::penguins |>

select(-species, -island, -sex) |>

correlation()

results# Correlation Matrix (pearson-method)

Parameter1 | Parameter2 | r | 95% CI | t(340) | p

-----------------------------------------------------------------------------------

bill_length_mm | bill_depth_mm | -0.24 | [-0.33, -0.13] | -4.46 | < .001***

bill_length_mm | flipper_length_mm | 0.66 | [ 0.59, 0.71] | 16.03 | < .001***

bill_length_mm | body_mass_g | 0.60 | [ 0.52, 0.66] | 13.65 | < .001***

bill_length_mm | year | 0.05 | [-0.05, 0.16] | 1.01 | 0.797

bill_depth_mm | flipper_length_mm | -0.58 | [-0.65, -0.51] | -13.26 | < .001***

bill_depth_mm | body_mass_g | -0.47 | [-0.55, -0.39] | -9.87 | < .001***

bill_depth_mm | year | -0.06 | [-0.17, 0.05] | -1.11 | 0.797

flipper_length_mm | body_mass_g | 0.87 | [ 0.84, 0.89] | 32.72 | < .001***

flipper_length_mm | year | 0.17 | [ 0.06, 0.27] | 3.17 | 0.007**

body_mass_g | year | 0.04 | [-0.06, 0.15] | 0.78 | 0.797

p-value adjustment method: Holm (1979)

Observations: 342Correlation visualization

Exploratory Data Analysis

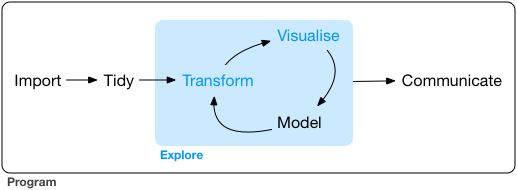

Exploratory Data Analysis

EDA is the systematic exploration of data using

- visualization

- transformation

- computation of characteristic values

- modeling

Systematic but no standard routine

“There are no routine statistical questions, only questionable statistical routines.” — Sir David Cox

“Far better an approximate answer to the right question, which is often vague, than an exact answer to the wrong question, which can always be made precise.” — John Tukey

Systematic but no standard routine

- Goal of EDA: Develop understanding of your data.

- EDA’s iterative cycle

- Generate questions about your data.

- Search for answers by visualizing, transforming, and modelling your data.

- Use what you learn to refine your questions and/or generate new questions.

- EDA is fundamentally a creative process.

Questions

- The way to ask quality questions:

- Generate many questions!

- You cannot come up with most interesting questions when you start.

- There is no rule which questions to ask. These are useful

- What type of variation occurs within my variables?

(Barplots, Histograms,…) - What type of covariation occurs between my variables?

(Scatterplots, Timelines,…)

- What type of variation occurs within my variables?

EDA embedded in a statistical data science project

- Stating and refining the question

- Exploring the data

- Building formal statistical models

- Interpreting the results

- Communicating the results

Data science projects

Outline of question-driven data work

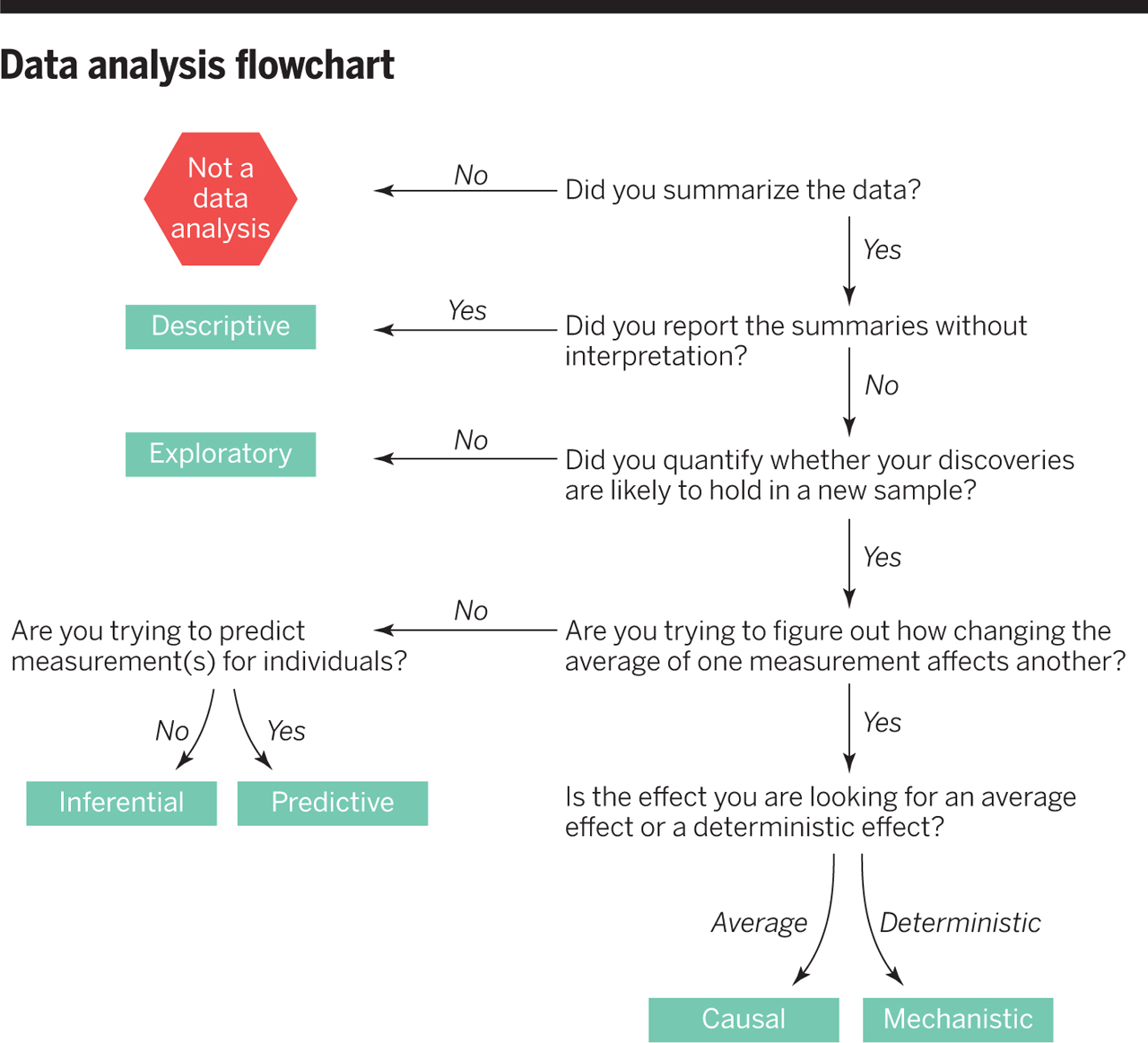

Six types of questions

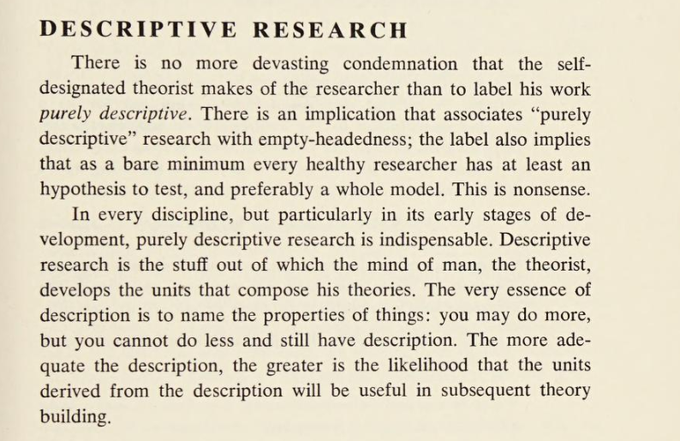

- Descriptive: summarize a characteristic of a set of data

- Exploratory: analyze to see if there are patterns, trends, or relationships between variables (hypothesis generating)

- Inferential: analyze patterns, trends, or relationships in representative data from a population

- Predictive: make predictions for individuals or groups of individuals

- Causal: whether changing one factor will change another factor, on average, in a population

- Mechanistic: explore “how” one factor (probably/most likely/potentially) changes another

We only did 1 and 2, so far.

Descriptive Projects

Data Analysis Flowchart

Example: COVID-19 and Vitamin D

- Descriptive: frequency of hospitalisations due to COVID-19 in a set of data collected from a group of individuals

- Exploratory: examine relationships between a range of dietary factors and COVID-19 hospitalisations

- Inferential: examine whether any relationship between taking Vitamin D supplements and COVID-19 hospitalisations found in the sample hold for the population at large

- Predictive: what types of people will take Vitamin D supplements during the next year

- Causal: whether people with COVID-19 who were randomly assigned to take Vitamin D supplements or those who were not are hospitalised

- Mechanistic: how increased vitamin D intake leads to a reduction in the number of viral illnesses

Questions to questions

- Do you have appropriate data to answer your question?

- Do you have information on confounding variables?

- Was the data you’re working with collected in a way that introduces bias?

Context Information is important!

- Not all information is in the data!

- Potential confounding variables you infer from general knowledge

- Information about data collection you may receive from an accompanying report

- Information about computed variables you may need to look up in accompanying documentation

- Information about certain variables you may find in an accompanying codebook. For example the exact wording of questions in survey data.

MDSSB-DSCO-02: Data Science Concepts